Critically assess the risks of liability in negligence arising from the use of artificial intelligence (AI)

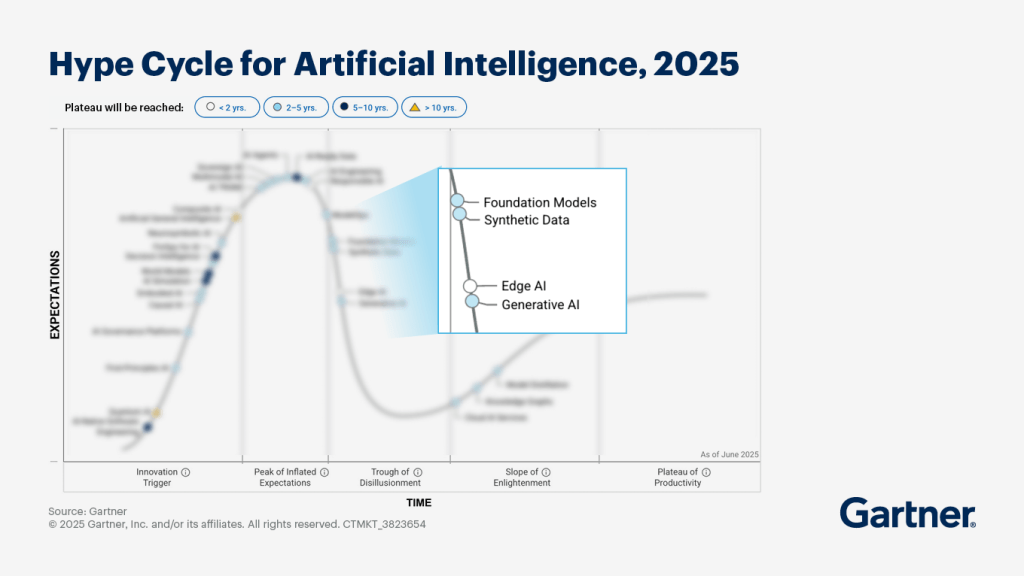

Forget the festive season, it’s an ‘AI Summer’. The availability of ‘Big Data’, affordable hardware and strong commercial interests have allowed Artificial Intelligence (AI) to mature, and various AI technologies are peaking in their hype cycles.[1] AI use is prevalent across industries, in the workplace, at home and on the go. In December 2025 alone, Time magazine named ‘The Architects of AI’ its ‘Person of the Year’ 2025;[2] Donald Trump signed an Executive Order to establish a federal policy framework and remove legal barriers at state level around AI governance;[3] and the UK government unveiled plans for further AI ‘Growth Zones’ to expand UK capacity for AI data centres,[4] supporting plans to leverage AI for economic growth.[5] Moore’s law may be dead but AI is showing no signs of slowing down.[6] However, in 2023, prominent individuals and institutions in the AI conversation signed an open letter requesting exactly that: calling for AI development to slow down and highlighting the pressing need for ‘independent review’,[7] amid extensive criticism of lawmakers’ failure to protect individuals from the impact of AI.[8] There has long been concern around AI-specific legal challenges such as infringement upon the rights of intellectual property owners and personal data subjects; automated decision-making embedded with bias and discrimination; and potential misuse by bad actors.

One issue with “AI” is that it has become both a marketing buzzword and an umbrella term. There are three main types of AI: agentic, generative and predictive modelling. Predictive modelling has long been embedded in applications ranging from commercial data analytics to social media algorithms. In the last few years, Agentic AI has become increasingly popular in organisations’ workflows, such as government application checks.[9] Very recently, Generative AI has become much discussed due to the availability of Large Language Models such as ChatGPT, Gemini and DeepSeek. The potential applications of AI have been both heavily championed and criticised. It has been suggested that AI could revolutionise industries, reduce human-capital costs for businesses and individuals, and generally catalyse automation throughout human life.[10] The flipside to many of these opportunities is the risk of arms races, job losses[11] and the general introduction of automation which does not align with human values.

AI governance is relatively immature, though some early models have been proposed. The models commonly highlight the importance of principles centred around transparency, responsibility and the need for proportionate control on self-improving technologies.[12] Some jurisdictions are bringing in legislation to address high-risk uses, such as integration in safety systems or automated decision-making in employment and law enforcement, whilst others, such as the US, are keen to limit regulation around AI to avoid stifling economic growth.[13] The risks of AI and the current lack of adequate regulation pose significant challenges in many areas of law, and a body of case law has already begun to emerge across jurisdictions. One area that has presented some interesting examples of AI risks and drawn scrutiny is negligence.

Negligence & AI

Generally, reasonable persons would be likely to avoid consulting or relying on advice from sources known to suffer from bias, errors and outright hallucinations. Despite this, Generative AI has become commonly used in formal environments, in work-product and even in courts and tribunals.[14] In an English case earlier this year, a former solicitor appealed the decision to strike him off the roll of solicitors by presenting legal authorities including 25 non-existent cases.[15] Historical speculation that AI may be capable of circumventing rules has been borne out by more recent warnings that malicious prompts could be designed to ‘jailbreak’ AI and produce “unintended behaviour”.[16] This poses challenges for tort law in applying the current legal tests for negligence to potential scenarios facilitated by AI. Negligence is an area of law which has developed incrementally;[17] however, issues such as potential liability gaps and the application of the ‘foreseeability test’ have highlighted the need for clarification and possibly reform.

Negligence typically arises where one party has suffered injury or loss due to the conduct of another party. This could materialise in myriad ways: during medical surgery, in the official functions of a public body, in workplace health and safety or in reliance on the advice of professional firms. To establish negligence it must be shown that one party owed a duty of care to another which was breached, causing loss or injury. A duty of care could arise from an employer’s statutory duty to protect the health and safety of workers,[18] a contractual agreement to provide reliable advice between professional firms or the common law duty of healthcare providers,[19] to name a few. Pursuers will generally need to demonstrate that the defender has breached their duty of care, resulting in the loss. In assessing whether the duty of care has been breached, consideration will be given to factors including remoteness, reasonable foreseeability[20] and intervening acts. There should be sufficient proximity[21] and knowledge of risk.[22] These tests are essential to ensure that liability is attributed and apportioned correctly to the relevant parties. They may be qualified by valid justifications for excluding liability in negligence,[23] such as limiting scope to ensure the floodgates are not opened every time that a loss occurs, protecting the public interest or avoiding the stifling of innovation.[24]

In light of the legal tests, there are several hurdles at which a claim involving AI could fall down. Firstly, AI does not have its own separate legal personality and cannot therefore assume liability. Throughout the AI lifecycle, inputs are sourced from many humans and various attached legal personalities. AI processing occurs in a ‘black box’ environment before outputs are generated, and these ‘hidden layers’ in decision-making may make it difficult to identify the source of errors or attribute them to a party. The lack of transparency and this ‘responsibility gap’ could make it challenging to establish causation or assess intervening acts.[25] This risk is particularly acute in iterative AI where, over time, the model could depart significantly from the coding inputs of programmers and be supervised by users with a limited capacity to understand the resulting outputs.[26] It does not seem feasible for losses involving decisions made by AI to be assessed comprehensively, due to the sheer volume of data and the lack of transparency in decision-making, exacerbated by the responsive potential of AI. There is also specific concern that companies could weaponise the AI ‘black box’ environment to intentionally obfuscate decision-making with the aim of ‘laundering’ legal responsibility to evade liability.[27]

Bandla v Solicitors Regulation Authority [2025] EWHC 1167 (Admin)

A former solicitor appealed against being struck off the roll of solicitors, presenting 25 non-existent cases generated by AI.

SW Harber v Commissioners for His Majesty’s Revenue and Customs [2023] UKFTT 1007 (TC)

9 non-existent cases generated by AI in tax appeal.

Ayinde v London Borough of Haringey & Al-Haroun v Qatar National Bank [2025] EWHC 1383 (Admin)

In these two cases, both solicitors were found to have presented non-existent AI-generated content, resulting in fines for both and an SRA referral in Al-Haroun.

(Mis)use of AI

Despite the legal risks, many high-profile cases have arisen of duty-holders failing to exercise adequate scrutiny over AI outputs before actioning them. These scandals have also included arguably foreseeable losses to victims through the use of automated decision-making by AI embedded with bias, discrimination and inaccuracy. In 2020, UK A-level grades were inaccurately awarded due to biased and inaccurate AI;[28] in 2021 the Dutch government resigned over a scandal caused by AI embedded with racist and discriminatory biases, resulting in qualifying families being denied benefits;[29] and Deloitte, one of the ‘Big Four’ accountancy firms, has repeatedly faced embarrassment after supplying ‘AI slop’ work-product for high-value government contracts.[30] These are examples where low-quality outputs have fortunately been caught and negligent parties have, to varying degrees, put their hands up. However, as cases arise with more complex supply chains and outputs from multiple parties, it may become harder to determine causation and liability. For example, if the Canadian Government had relied upon Deloitte’s report and used its fabricated authorities as an input, perhaps into another tool using AI, which was then used to make a decision on benefits, it would be both time-consuming and costly to establish and apportion liability.

Whilst organisations have been caught using Generative AI to create sloppy reports lacking both reasonable care and professional competence, it is possible that the issue is not AI at all. The issue is the misapplication of AI and the lack of due diligence from humans relying on it for shortcuts. It is human users who are failing to apply best practices. It is known that Generative AI can hallucinate or make mistakes; such errors are foreseeable, and therefore outputs should be treated as though they were simply the output of another person and subjected to sufficient scrutiny accordingly.[31] It is known that even well-written prompts channelled through the ‘black-box’ environment could potentially generate random outputs and that human-coded AI can reflect biases and discrimination. For these reasons, the role of human oversight in automated decision-making has been stressed by regulators.[32]

AI Maturity

It is important to recognise that AI is largely at the peak of the ‘hype cycle’ and has not yet reached maturity.

Whilst dialogue around the promise and dangers of AI is omnipresent, many proposed use cases will not be realised in the short term, or possibly at all, due to rising costs and low return on investment.[33] There is no shortage of use cases which have reached implementation and subsequently been rolled back due to low quality, bugs or quite simply a lack of purpose.[34]

AI regulation is likely to face similar challenges to broader technology law: regulation and enforcement are hampered by a lack of consistency and co-operation between jurisdictions, by libel tourism and by online tools designed to circumvent compliance or facilitate misuse. Given the known issues and limitations, AI users should subject outputs to strong scrutiny to ensure that duties are met with the relevant standard of care. Organisations should ensure that contractual agreements contain adequate provision for quality assurances and remedies in the event that employees, agents, suppliers, vendors or sub-contractors provide products, services or advice relying on AI; this could be addressed by reaching consensus on an AI agent’s principal and clearly defining this in agreements. Lawmakers should take advantage of any slowdowns to expedite clarification on the points of law and reform legislation and regulation as needed, to ensure that the tests of negligence can easily be applied in instances involving AI without the need for extensive, costly legal proceedings. From a purely legal perspective, it is possible this could be achieved through initial ‘top-down’ regulation applying strict liability to the use of AI, with liability relaxing where appropriate once the technology becomes better understood and users become cognisant of the risks involved. However, due to the economic potential of AI, jurisdictions such as the US and UK seem to be leaning into a ‘ground-up’ regulatory approach, which may signal that, for now, claims for negligent losses involving the use of AI will be decided on a costly, complex, case-by-case basis.

References

[1] Gartner Inc., ‘Hype Cycle for Artificial Intelligence, 2025’ (Gartner Inc., 8 July 2025) <https://www.pasqal.com/resources/new-gartner-hype-cycle-for-ai-report-2025/> Accessed 13 December 2025

[2] Charlie Campbell, Andrew R. Chow and Billy Perrigo, ‘The Architects of AI are TIME’s 2025 Person of the Year’ (Time Magazine, 11 December 2025) <https://time.com/7339685/person-of-the-year-2025-ai-architects/> Accessed 13 December 2025

[3] Donald J. Trump, ‘Ensuring a National Policy Framework for Artificial Intelligence’ Executive Order 14365?, (The White House, 11 December 2025) <https://www.whitehouse.gov/presidential-actions/2025/12/eliminating-state-law-obstruction-of-national-artificial-intelligence-policy/> Accessed 13 December 2025

[4] Department for Science, Innovation & Technology, Delivering AI Growth Zones (DSIT, 13 November 2025) <https://www.gov.uk/government/publications/delivering-ai-growth-zones/delivering-ai-growth-zones#introduction> Accessed 12 December 2025

[5] Department for Science, Innovation & Technology, AI Opportunities Action Plan (DSIT, 13 January 2025) <https://www.gov.uk/government/publications/ai-opportunities-action-plan/ai-opportunities-action-plan> Accessed 12 December 2025

[6] BBC, ‘Any more for Moore’s Law?’ (BBC Sounds, 17 April 2025) <https://www.bbc.co.uk/sounds/play/w3ct6yf5> Accessed 15 December 2025

[7] Open Letter, ‘Pause Giant AI Experiments: an Open Letter’ (Future of Life Institute, 22 March 2023) <https://futureoflife.org/open-letter/pause-giant-ai-experiments/> Accessed 14 December 2025

[8] Steven Levy, ‘The Year of ChatGPT and Living Generatively’ (Wired, 1 December 2023) <https://www.wired.com/story/plaintext-chatgpt-year-of-living-generatively/> Accessed 12 December 2025

[9] National Audit Office, ‘Use of Artificial Intelligence in Government’, (Department for Science, Innovation & Technology 15 March 2025 HC 612) 2.5

[10] Eric Hazan, ‘Human capital for the age of generative AI’ (McKinsey Global Institute, 30 May 2024) <https://www.mckinsey.com/mgi/media-center/human-capital-for-the-age-of-generative-ai> Accessed 13 December 2025; Joshua Rothman, ‘Is AI actually a bubble?’ (The New Yorker, 12 December 2025) <https://www.newyorker.com/culture/open-questions/is-ai-actually-a-bubble?_sp=783ad5d8-5b6e-43c6-bc25-e56543bd55b4.1765637967785> Accessed 13 December 2025

[11] Eric Hazan et al., ‘A new future of work: The race to deploy AI and raise skills in Europe and Beyond’ (McKinsey Global Institute, 21 May 2024) <https://www.mckinsey.com/mgi/our-research/a-new-future-of-work-the-race-to-deploy-ai-and-raise-skills-in-europe-and-beyond#/> Accessed 13 December 2025

[12] Open Letter, ‘Asilomar AI Principles’ (Future of Life Institute, 11 August 2017) <https://futureoflife.org/open-letter/ai-principles/> Accessed 14 December 2025

[13] European Parliamentary Research Service, ‘Artificial Intelligence Act’

[14] SW Harber v Commissioners for His Majesty’s Revenue and Customs [2023] UKFTT 1007 (TC); Olsen v Finansiel Stabilitet A/S [2025] EWHC 42 (KB); Mata v Avianca Inc Case No. 22-cv-1461 (PKC), 2023 WL 4114965 (SDNY 22 June 2023); Valu v Minister for Immigration and Multicultural Affairs (No 2) [2025] FedCFamC2G 95; Wikeley v Kea Investments Ltd [2024] NZCA 609; Zhang v Chen [2024] BCSC 285; Ayinde v London Borough of Haringey & Al-Haroun v Qatar National Bank [2025] EWHC 1383 (Admin)

[15] Bandla v Solicitors Regulation Authority [2025] EWHC 1167 (Admin)

[16] Ray Kurzweil, The Age of Spiritual Machines: When Computers Exceed Human Intelligence (Published Viking Press 1999) 178-182; Stephen Witt, ‘The A.I. Prompt that could end the world’ (The New York Times, 10 October 2025) <https://www.nytimes.com/2025/10/10/opinion/ai-destruction-technology-future.html> Accessed 15 December 2025

[17] Yuen Kun Yeu and Others v Attorney-General [1988] LRC (Comm) 763

[18] Health and Safety at Work etc. Act 1974 S2(1)

[19] Bolam v Friern Hospital Management Committee [1957] 1 WLR 582; Bolitho v City and Hackney Health Authority [1998] AC 232; Penney, Palmer and Canon v East Kent Health Authority [2000] Lloyd’s Rep Med 41

[20] Donoghue v Stevenson 1932 SC (HL) 31, 44

[21] Anns v Merton London Borough Council [1978] AC 728; Caparo Industries PLC v Dickman [1990] 2 AC 605

[22] Osman v United Kingdom (1998) 29 EHRR 245

[23] Home Office v Dorset Yacht Co Ltd [1970] AC 1004, 1027

[24] John Munroe (Acrylics) Ltd v London Fire and Civil Defence Authority and others [1997]; McLoughlin v O’Brian [1983] 1 AC 410

[25] Andreas Matthias, ‘The responsibility gap: Ascribing responsibility for the actions of learning automata’, in Ethics and Information Technology Vol 6 175-183 (Published Kluwer Academic Publishers 2004) <https://link.springer.com/epdf/10.1007/s10676-004-3422-1?sharing_token=VHn-tp7g4Hh3T9zQLkuy_ve4RwlQNchNByi7wbcMAY4TL-kHlb21RP101LiGjOTo8ehg0XOdNxMVhNloMO0R9EId2rRYVcQck1klD7dj4gqlVp6EIqnkp6L-ycarfLhWsE-2cB3UHF6B5JBllkJGwe1Mlpj2yMWzQ2sQOQr7cgs%3D> Accessed 13 December 2025

[26] Andreas Matthias, ‘The responsibility gap: Ascribing responsibility for the actions of learning automata’, in Ethics and Information Technology Vol 6 175-183 (Published Kluwer Academic Publishers 2004) <https://link.springer.com/epdf/10.1007/s10676-004-3422-1?sharing_token=VHn-tp7g4Hh3T9zQLkuy_ve4RwlQNchNByi7wbcMAY4TL-kHlb21RP101LiGjOTo8ehg0XOdNxMVhNloMO0R9EId2rRYVcQck1klD7dj4gqlVp6EIqnkp6L-ycarfLhWsE-2cB3UHF6B5JBllkJGwe1Mlpj2yMWzQ2sQOQr7cgs%3D> Accessed 13 December 2025

[27] Alan Rubel, Clinton Castro and Adam Pham, ‘Agency Laundering and Information Technologies’, Emanuela Ceva, Lubomira Radoilska in Ethical Theory and Moral Practice Vol 22 (Published Springer Nature Press 24 October 2019) 1017-1041 <https://link.springer.com/article/10.1007/s10677-019-10030-w> Accessed 13 December 2025

[28] Daan Kolkman, ‘“F**k the algorithm”?: What the world can learn from the UK’s A-level grading fiasco’ (LSE 26 August 2020) <https://blogs.lse.ac.uk/impactofsocialsciences/2020/08/26/fk-the-algorithm-what-the-world-can-learn-from-the-uks-a-level-grading-fiasco/> Accessed 14 December 2025

[29] Gijs van Maanen and Daan Kolkman, ‘AI decision making doesn’t need to be explainable, but it should be responsive’ (LSE 6 January 2025) <https://blogs.lse.ac.uk/impactofsocialsciences/2025/01/06/ai-decision-making-doesnt-need-to-be-explainable-but-it-should-be-responsive/> Accessed 14 December 2025; Parliamentary Question to European Parliament, ‘The Dutch childcare benefit scandal, institutional racism and algorithms’ (EUP 2022) <https://www.europarl.europa.eu/doceo/document/O-9-2022-000028_EN.html> Accessed 14 December 2025

[30] Nina Paoli, ‘Deloitte allegedly cited AI-generated research in a million-dollar report for a Canadian provincial government’ (Fortune 25 November 2025) <https://fortune.com/2025/11/25/deloitte-caught-fabricated-ai-generated-research-million-dollar-report-canada-government/> Accessed 14 December 2025; Krishani Dhanji, ‘Deloitte to pay money back to Albanese government after using AI in $440,000 report’ (The Guardian, 6 October 2025) <https://www.theguardian.com/australia-news/2025/oct/06/deloitte-to-pay-money-back-to-albanese-government-after-using-ai-in-440000-report> Accessed 14 December 2025

[31] Information Commissioner’s Office, ‘How do we ensure individual rights in our AI systems?’ (ICO 15 March 2023) <https://ico.org.uk/for-organisations/uk-gdpr-guidance-and-resources/artificial-intelligence/guidance-on-ai-and-data-protection/> Accessed 11 December 2025

[32] Gartner Inc., ‘Gartner Predicts Over 40% of Agentic AI Projects Will Be Cancelled by End of 2027’ (Gartner Inc., 25 June 2025) <https://www.gartner.com/en/newsroom/press-releases/2025-06-25-gartner-predicts-over-40-percent-of-agentic-ai-projects-will-be-canceled-by-end-of-2027> Accessed 13 December 2025

[33] Jack Dyson, ‘“Embarrassing”: AI attendance reports suspended days after launch’ (Schools Week, 17 November 2025) <https://schoolsweek.co.uk/embarrassing-ai-attendance-reports-suspended-days-after-launch/> Accessed 13 December 2025; Troy Griggs and Daisuke Wakabayashi, ‘How a self-driving Uber killed a pedestrian in Arizona’ (New York Times, 21 March 2018) <https://www.nytimes.com/interactive/2018/03/20/us/self-driving-uber-pedestrian-killed.html> Accessed 13 December 2025